A Lap Around Big Data with Microsoft HDInsight

June 23, 2013

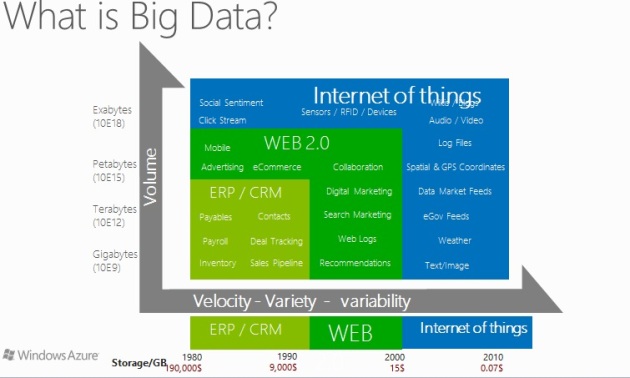

Big Data is synonymous with the three Vs: Volume, Velocity, and Variety. From traditional e-commerce systems to modern social networks, data retention in every kind of system increasingly depends on this platform. Let's look at a scenario of modern e-commerce analytics after integration with Big Data.

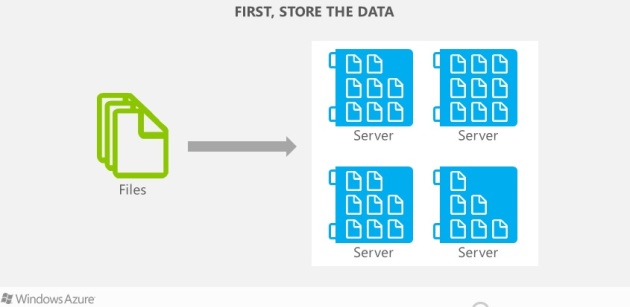

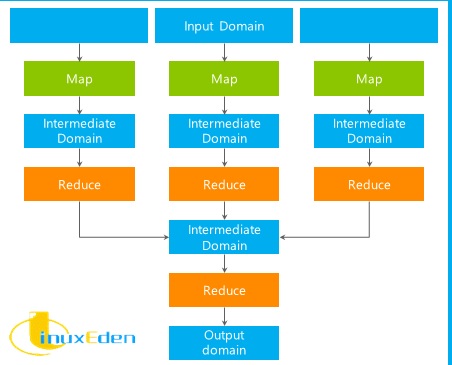

- A Big Data platform typically works by first storing data in clusters and then processing it through MapReduce workflows. The Map phase splits the input data into independent chunks, each processed by the appropriate algorithm. The output of the Map phase then moves to the Shuffle/Sort phase, and finally the output of the Shuffle phase becomes the input to the Reduce phase.

- Let's check a typical Big Data MapReduce workflow.

- Microsoft’s Big Data platform works in exactly the same way. It is a collaborative solution with Hortonworks named Microsoft HDInsight, which typically simplifies running complex batch scripts. Let's take a brief look at the HDInsight/Hadoop ecosystem.

- Microsoft’s Big Data platform offers solutions that range from storing data in HDFS, to query processing with Hive, up to implementing Business Intelligence analytics with Excel PowerPivot, SSAS, and SSRS.

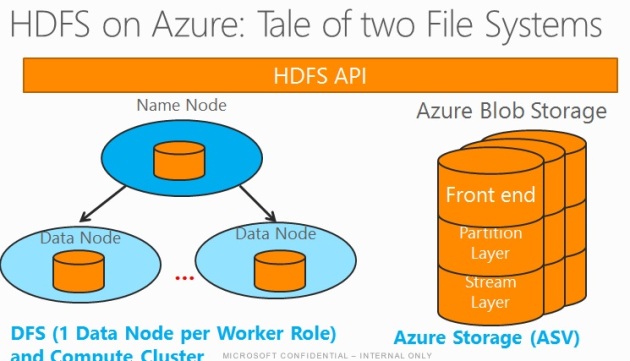
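To make the Map, Shuffle/Sort, and Reduce phases described earlier more concrete, here is a minimal single-process sketch in Python that counts words. It is not HDInsight or Hadoop code. The function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `run_job`) and the sample input are made up for illustration, and a real cluster would run the map and reduce steps in parallel on different nodes.

```python
from collections import defaultdict


def map_phase(chunk):
    """Map: emit a (word, 1) pair for every word in one input chunk."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle_phase(mapped_pairs):
    """Shuffle/sort: group all values by key and order the keys."""
    groups = defaultdict(list)
    for key, value in mapped_pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reduce_phase(key, values):
    """Reduce: collapse all values for one key into a single result."""
    return key, sum(values)


def run_job(chunks):
    """Run the three phases in order over a list of independent chunks."""
    mapped = []
    for chunk in chunks:  # on a cluster, each chunk could go to a different node
        mapped.extend(map_phase(chunk))
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped)


if __name__ == "__main__":
    # Hypothetical input: two chunks of one larger text file.
    print(run_job(["big data big cluster", "data node data"]))
```

The point of the split is that `map_phase` never needs to see more than one chunk and `reduce_phase` never needs to see more than one key, which is what lets a cluster spread the work across machines.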

- Storing data in HDFS: petabytes to zettabytes of data can be stored in HDFS clusters, managed by a Name Node together with Data Nodes. In Azure HDInsight, each Data Node is integrated with a worker role and a compute cluster. Alternatively, you can use Azure Blob Storage, which is organized into three layers: the Front End layer (attaches the OAuth/security layer for authentication), the Partition layer (maps to Azure queue, table, and blob storage), and the Stream layer (3-layer HA for scaled-out data streams).

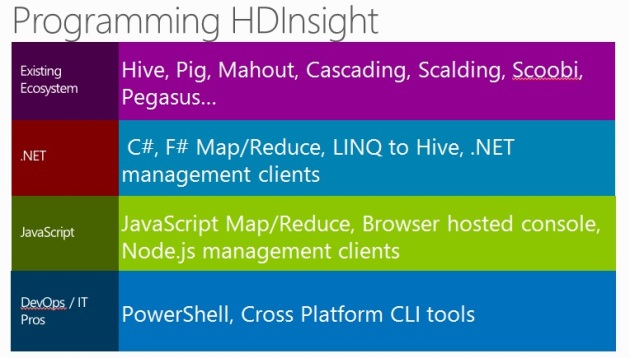
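The division of labor between the Name Node and the Data Nodes can be sketched with a toy model: a file is cut into fixed-size blocks, each block is copied to several Data Nodes, and the Name Node only keeps the map of which block lives where. The sketch below is an illustration under simplifying assumptions, not the HDFS API. The helper names, the tiny block size, and the round-robin placement are all hypothetical, and real HDFS uses much larger blocks and a rack-aware placement policy.

```python
def split_into_blocks(data, block_size):
    """Cut a byte string into fixed-size blocks (the last one may be shorter)."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def place_blocks(num_blocks, data_nodes, replication=3):
    """Build Name Node style metadata: block index -> Data Nodes holding a copy.

    Placement here is plain round-robin, an assumption made for brevity.
    """
    if replication > len(data_nodes):
        raise ValueError("replication factor exceeds the number of Data Nodes")
    placement = {}
    for block_id in range(num_blocks):
        placement[block_id] = [
            data_nodes[(block_id + offset) % len(data_nodes)]
            for offset in range(replication)
        ]
    return placement


if __name__ == "__main__":
    # Hypothetical file content and node names.
    blocks = split_into_blocks(b"abcdefghij", block_size=4)
    metadata = place_blocks(len(blocks), ["dn1", "dn2", "dn3", "dn4"], replication=2)
    for block_id, nodes in metadata.items():
        print(block_id, blocks[block_id], nodes)
```

Because every block has more than one holder, losing a single Data Node in this model still leaves a copy of each block reachable through the metadata.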

- To program on HDInsight, you can opt for Java, C#, F#, .NET, the .js API, or the LINQ to Hive APIs, which let you write code for the Hadoop ecosystem, including Pig, Hive, Mahout, Cascading, and Pegasus.
